ABI is a simple program for the ModularEEG (http://openeeg.sourceforge.net) that implements an experimental Brain-Computer Interface (BCI). BCI research is currently a highly active field, but the existing technology is still too immature for use outside a laboratory setting. ABI aims to give hobbyists a simple tool for running their own BCI experiments.


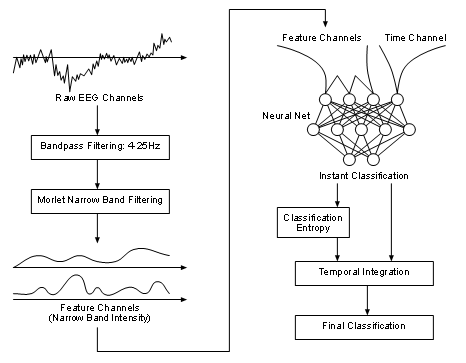

ABI is a trial-based BCI. A trial is a time interval during which the user generates brainwaves to perform an action. The BCI processes this signal and tries to assign it to one of the configured classes. The assignment is done by feeding a neural net with the preprocessed EEG data. The neural net's output is then processed further, and this final output corresponds to the recognized class. The neural net must be trained before it can learn this association.

The classifier's idea is heavily based on Christin Schäfer's design (winner of the BCI Competition II, Motor Imagery Trials).

The ABI software allows you to

The method has previously been applied to the data provided by the BCI Competition II (dataset III, Graz University, Motor Imagery) and compared against the results obtained by the contributors. It outperformed them on Mutual Information, the criterion used in the competition, reaching 0.67 bits (the winner of the competition obtained 0.61 bits).

Of course, it is very important that more people test the software and report their results so that the method can be improved. Statistical stability can only be established if more people try it out.

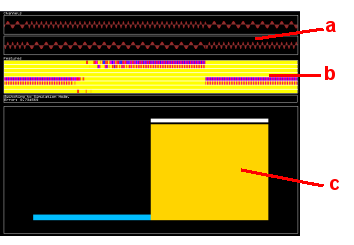

When ABI starts, it reads a configuration file called "abi.txt" (which you can edit with a simple text editor) that specifies how the BCI should behave. ABI then tries to load the trial file named in the configuration file. The trial file is a text database containing trials for the different classes. Then, the main screen is displayed:

ABI has three operating modes: SIMULATION, RECORDING and TRAINING. You can switch between operating modes by pressing F1, F2 or F3 respectively (the software doesn't change its mode instantly, because a trial shouldn't be interrupted in the middle).
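The deferred mode change can be pictured with a small sketch. This is only an illustration of the behaviour described above, not ABI's actual code: the class `ModeSwitcher`, its method names, and the choice of SIMULATION as the initial mode are all assumptions.

```python
from enum import Enum


class Mode(Enum):
    SIMULATION = "F1"
    RECORDING = "F2"
    TRAINING = "F3"


class ModeSwitcher:
    """Hypothetical helper: holds a requested mode until the running trial ends."""

    def __init__(self, initial=Mode.SIMULATION):  # initial mode is an assumption
        self.current = initial
        self.pending = None

    def on_key(self, key):
        # Remember the request, but do not interrupt the running trial.
        for mode in Mode:
            if mode.value == key:
                self.pending = mode

    def on_trial_end(self):
        # Apply the most recent request, if any, between two trials.
        if self.pending is not None:
            self.current = self.pending
            self.pending = None
        return self.current


switcher = ModeSwitcher()
switcher.on_key("F2")                  # RECORDING requested in the middle of a trial
mode_during_trial = switcher.current   # still the old mode
mode_after_trial = switcher.on_trial_end()
```

In this sketch the key press only stores a request; the mode actually changes when the current trial is over.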

The operation is quite simple. The user records several trials for the different classes (RECORDING mode). Each class is associated with a different mental task. After recording a reasonable number of trials (more than 50 for each class), the user can train the system to discriminate between the different classes (TRAINING mode). This process can be repeated to improve the quality of the recognition. The system can be tested in SIMULATION mode.

An explanation of the different modes follows.

The SIMULATION and RECORDING modes both perform single trials. The SIMULATION mode is used to test the BCI. RECORDING is the same as SIMULATION, except that the EEG data is recorded and used as training examples. A trial has the following structure:

As you can see, a trial is composed of three subintervals, whose durations are defined by the variables TPreparation, TPreRec and TrialLength in the configuration file.
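As a rough illustration, the timeline of one trial can be computed from these three durations. The sketch below assumes that the subintervals run back to back in the order preparation, prerecording, trial; the function name `trial_phases` is hypothetical, and the values are the ones used in the test configuration further down this page.

```python
def trial_phases(t_preparation, t_prerec, trial_length):
    """Return (name, start, end) tuples in seconds, counted from the start of the trial.

    Assumes the three subintervals follow each other in this order.
    """
    phases = []
    start = 0.0
    for name, duration in (
        ("preparation", t_preparation),
        ("prerecording", t_prerec),
        ("trial", trial_length),
    ):
        phases.append((name, start, start + duration))
        start += duration
    return phases


phases = trial_phases(t_preparation=4, t_prerec=0.5, trial_length=5)
total_seconds = phases[-1][2]  # end of the last subinterval

for name, start, end in phases:
    print(f"{name:>12}: {start:4.1f} s to {end:4.1f} s")
```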

When ABI exits, the EEG data recorded so far is saved to the file given by the parameter TrialArchive. You can open a trial archive with a simple text editor and see how the trial data has been recorded. Only the raw EEG data and the class labels are recorded: the features, which are the real training data, are computed on the fly.

When you start ABI, it loads the trial archive specified in the configuration file if it exists, or creates a new one if it does not. If the trial archive doesn't match the configuration file's specifications, ABI aborts. So be careful to use trial archives and configuration files that belong together.

EEG data recorded in different executions of ABI is appended to the trial archive. This allows you to build your training set over several sessions. Be careful to use the same electrode settings each time. Some users have reported that the recognition rate drops between sessions.

The configuration file tells ABI where to load the trial data from, how many channels the system should use, which features it should use, etc. You can open it with your favourite text editor and edit it. To start ABI with a configuration file other than the default "abi.txt", invoke ABI with the following syntax at the command prompt:

> abi <my_configuration_file>

The configuration file is essentially a list of variables:

| Variable Name | Description |
| --- | --- |
| Device | This is the string that tells ABI how to initialize the ModularEEG. You shouldn't change it. |
| NChannels | The number of channels to use. |
| NFeatures | The number of features the ABI should extract from the raw EEG data in order to feed the neural net. |
| NClasses | The number of classes that the system should be able to discriminate. |
| TrialArchive | The name of the associated trial archive. |
| Channels | The index list of source channels for the feature extraction. |
| Frequencies | The list of frequencies to extract from the EEG data to use as features. |
| HiddenUnits | The number of hidden units of the neural net. |
| TrialBuffer | The size of the training set used to train the neural net. |
| TrialLength | The length in seconds for each trial. |
| TPreparation | The length in seconds for the preparation time. |
| TPreRec | The length in seconds for the prerecording time. |

A variable and its value must be on the same line.
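To make the one-variable-per-line format concrete, here is a minimal sketch of how such a file could be read. It is not ABI's own parser: the helpers `parse_config` and `int_list` are hypothetical, and the sketch assumes that every line has the form `Name = value` and that list values are separated by spaces, as in the example configuration on this page.

```python
def parse_config(text):
    """Parse 'Name = value' lines into a dict mapping names to value strings."""
    config = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or "=" not in line:
            continue  # skip blank or malformed lines
        name, value = line.split("=", 1)
        config[name.strip()] = value.strip()
    return config


def int_list(value):
    """Turn a space-separated value such as '8 14 20 30' into a list of ints."""
    return [int(item) for item in value.split()]


sample = """\
NChannels = 1
NFeatures = 4
Channels = 0 0 0 0
Frequencies = 8 14 20 30
TPreRec = 0.5
"""

config = parse_config(sample)
frequencies = int_list(config["Frequencies"])
t_prerec = float(config["TPreRec"])
```

All values are kept as strings by `parse_config` and converted only where they are used, which keeps the sketch independent of any particular variable.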

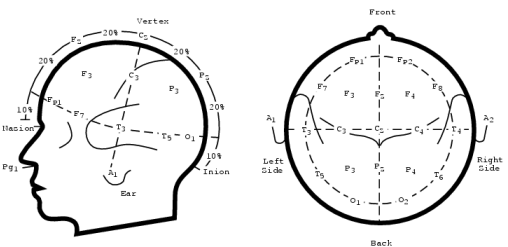

As a reference, this is the international 10-20 system:

If you want to check if the software is actually doing something, try the following simple test. This isn't a real BCI test, it's just for testing purposes.

Try to control the bars simply by grinding your teeth. Using just one channel over the frontal area (Fp1 and Fp2, for example), you can train ABI to discriminate between 2 different classes. Copy the following ABI configuration and start the system.

| test.txt |
| --- |
| Device = port COM1 57600; fmt P2; rate 256; chan 2; |
| NChannels = 1 |
| NFeatures = 4 |
| NClasses = 2 |
| TrialArchive = test.txt |
| Channels = 0 0 0 0 |
| Frequencies = 8 14 20 30 |
| HiddenUnits = 4 |
| TrialBuffer = 30 |
| TrialLength = 5 |
| TPreparation = 4 |
| TPreRec = 0.5 |
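In this configuration, Channels and Frequencies both have NFeatures = 4 entries. One plausible reading, which is an assumption and not something this page states, is that feature i is the strength of frequency Frequencies[i] on channel Channels[i]. The sketch below illustrates that reading with a single-bin discrete Fourier transform on a synthetic signal; the functions `band_amplitude` and `extract_features` are hypothetical, and ABI's real feature extraction may differ.

```python
import math


def band_amplitude(samples, frequency, rate):
    """Amplitude of one frequency component, computed with a single-bin DFT."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * frequency * i / rate) for i, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * frequency * i / rate) for i, s in enumerate(samples))
    return 2 * math.hypot(re, im) / n


def extract_features(eeg, channels, frequencies, rate):
    """One feature per (channel, frequency) pair, paired by position (an assumption)."""
    return [band_amplitude(eeg[ch], f, rate) for ch, f in zip(channels, frequencies)]


rate = 256  # samples per second, as in "rate 256" of the Device string
# Synthetic one-second signal for channel 0: a 14 Hz sine wave of amplitude 1.
signal = [math.sin(2 * math.pi * 14 * i / rate) for i in range(rate)]
features = extract_features([signal], [0, 0, 0, 0], [8, 14, 20, 30], rate)
```

With this synthetic input, only the second feature (14 Hz) is large; the other three stay close to zero.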

Now, enter the RECORDING mode by pressing [F2]. Grind your teeth when the system asks you to perform the mental task associated with class 1 (the left bar). Relax for class 2. After recording 10 trials for each class, train the network by pressing [F3]. Wait until the classification error drops to a reasonable value (for example, 1.2 bits). Then, enter the SIMULATION mode by pressing [F1]. Do the same as you did while recording. The system should classify the trials correctly: when you grind your teeth, the left bar should be higher than the right one, and vice versa.

First of all, be patient! By using a trainable classification method, the system tries to adapt the BCI to the user and so to shorten the learning process required of the user. Nevertheless, like any other instrument, the BCI takes a considerable amount of practice before it gives good results.

BCI technology is still in its infancy, so little is known about which mental tasks work better than others for BCIs. Electrode placement is also important. If your electrode setting isn't appropriate, the electrodes may not even be recording the cortical areas related to the mental task!

Research has discovered the following changes in electrical activity during mental tasks (this list isn't complete; I hope that the OpenEEG community will discover some more):

Do not use too many features at the same time; 4-10 features are reasonable. If you want to change the features used, restart the BCI with the appropriate change in the configuration file.

Please report your experiences with this system. If you manage to find good mental tasks and their electrode positions, please report them! Since this field is new, every little discovery could help to improve the technology. You really can make an important contribution to this field of research!

Good trial files are welcome. If you've recorded EEG data that could be classified successfully, it would help in analyzing the patterns it contains. Remember to specify the mental tasks, features and electrode positions.

The design of the classifier is heavily based on Christin Schäfer's design used for the Dataset III of the BCI Competition II. Instead of using a Gaussian multivariate Bayesian classifier, here we use a neural net to obtain the classification for each time instant t. Those outputs are then integrated in time using a weighted sum. The idea is simple: outputs with low confusion should have higher weights.
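The weighting idea can be sketched as follows. The text above only says that outputs with low confusion should get higher weights; measuring confusion by the entropy of the net's output is an assumption made here for illustration, as are the function names `confidence` and `integrate_outputs` and the requirement that each output is a list of class probabilities summing to 1.

```python
import math


def confidence(probabilities):
    """1 minus the normalised entropy: 1 for a certain output, 0 for a uniform one."""
    n = len(probabilities)
    entropy = -sum(p * math.log(p) for p in probabilities if p > 0)
    return 1.0 - entropy / math.log(n)


def integrate_outputs(outputs):
    """Weighted average over time of the per-instant class probabilities of one trial."""
    n_classes = len(outputs[0])
    weights = [confidence(p) for p in outputs]
    total = sum(weights)
    if total == 0:
        # Every instant was maximally confused: fall back to a uniform answer.
        return [1.0 / n_classes] * n_classes
    return [
        sum(w * p[c] for w, p in zip(weights, outputs)) / total
        for c in range(n_classes)
    ]


# Three time instants, two classes: one undecided, one confident, one nearly undecided.
outputs = [[0.5, 0.5], [0.9, 0.1], [0.4, 0.6]]
integrated = integrate_outputs(outputs)
predicted_class = integrated.index(max(integrated))
```

In this example the confident output at the second instant dominates the result, whereas a plain average would give the first class only 0.6.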

These are the different steps:

Pedro Ortega <peortega@dcc.uchile.cl>

Santiago de Chile, January 16, 2005.